I wrote this blog post because I ran into a Delphi coder who was using a non-version-control tool like Zip or Dropbox to store their code. Now I'm not saying you should never store code in zip files or upload code to Dropbox, but that should not be your source of truth. This is a highly opinionated fly-through of the basics that a non-Git user should be aware of.

WHY GIT? GIT IS THE STANDARD FOR SOURCE CONTROL

What is source control? Source control means having a single SOURCE OF TRUTH for what your code is. Ideally that single source of truth should be a private or public repository hosted on a Git server, like Bitbucket or GitLab.

Why GIT? Literally nobody is policing this. If you're a solo developer, pick whatever version control system you want, but if you pick anything other than Git, you're probably going to regret it eventually. There are network effects (an economics term, really) here. I'm not going to explain network effects. If you have to google that, go ahead.

What is a source of truth? Git provides a source of truth because every Git commit has a "revision hash", a fingerprint that identifies it uniquely. Git gives you a way to say for sure that if you have git commit A939B9.... (more digits omitted), you know exactly what every single file in your product's source code contained. To be really sure about what you shipped, you should also be using Continuous Integration instead of building things on your developer PC.
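If you want to see why that fingerprint is trustworthy, here is a minimal Python sketch of how Git derives the id of a single file's content (a "blob"). It assumes a repository using Git's default SHA-1 object format, and the function name `git_blob_id` is my own invention, not anything Git ships. A commit hash is built on top of ids like this one, so changing even one byte in one file produces a different commit hash.

```python
import hashlib


def git_blob_id(content: bytes) -> str:
    """Return the SHA-1 id Git gives to a file with exactly this content."""
    # Git hashes a small header ("blob <size in bytes>\0") followed by the raw bytes.
    header = b"blob " + str(len(content)).encode("ascii") + b"\0"
    return hashlib.sha1(header + content).hexdigest()


if __name__ == "__main__":
    # Two made-up versions of a tiny Delphi program (illustrative content only).
    original = b"program Demo;\nbegin\nend.\n"
    edited = b"program Demo;\nbegin\n  WriteLn('hi');\nend.\n"

    print(git_blob_id(original))
    print(git_blob_id(edited))
    # Same bytes always give the same id; different bytes give a different id.
    print(git_blob_id(original) == git_blob_id(original))
    print(git_blob_id(original) == git_blob_id(edited))
```

You never have to compute these by hand. The point is only that the hash is a function of the content, which is what makes it a source of truth.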

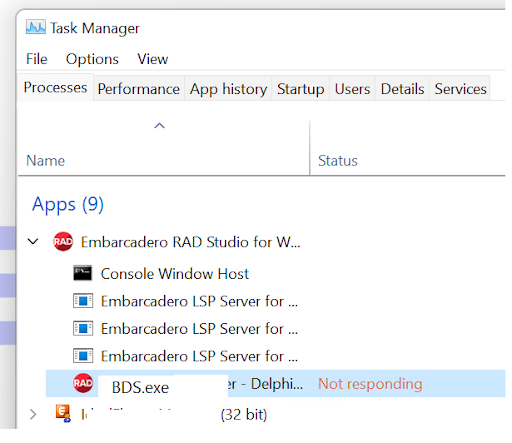

What is CI (continuous integration)? Another reason to use Git is so that your code can be built automatically whenever you change it, in a controlled and repeatable environment, so that you actually know what you sent your customers, if you have customers. If you work for a company of more than one person that sells software, and the binaries you put on customer PCs were built on your own desktop computer rather than on a build server, you should be using Git too, so you can get ready to build your software in an automated build (CI) environment.

What are these unit and integration test things people talk about? You have those, right? If you have Git, CI, and unit tests, you can run those tests every time your code builds. Are you beginning to see possibilities unfolding like flowers? Even if you are a single developer, having CI and unit tests that run on every git commit can really help you.

How do I get started? The rest of this post lays out a basic plan for getting started with Git.

Wait, I have objections... Post them in the comments. "I prefer Subversion" is an opinion, not an objection, but feel free to tell me that if you like.

Step 1. Sign up for an account on Bitbucket.

Step 2. Download SourceTree. It is the easiest Git client to learn. It is not the fastest or the most powerful, and not one you will stay with forever, but SourceTree is good at holding new users' hands and getting them some critical early experience with version control, especially if you haven't used version control before and are coming from a background of using Zip or Dropbox to "back up" your code.

Step 3. Create a dummy repository in Bitbucket and clone it with SourceTree. Follow their tutorials.

Step 4. Create a Delphi application with File -> New Application. Save the project and the main form, giving them the NAMES they are going to keep for a while, and commit that brand-new source code right after that first save.

Step 5. Using your Git GUI, Add and Commit the files to the local Git repository. So far you have only been working with your own files on your own hard drive.

Step 6. Push your commits up to the repo you created in Bitbucket. Log into the Bitbucket website and find the code on the repositories page of your account.
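If you are curious what the Add, Commit and Push buttons roughly amount to, here is a small Python sketch that spells out the equivalent git commands as argument lists. The helper names `first_push_commands` and `run_in_repo`, the remote name `origin`, and the example folder are my assumptions for illustration. I'm not claiming this is exactly what SourceTree runs internally, and you do not need any of this to follow the steps with the GUI.

```python
import subprocess
from typing import List


def first_push_commands(message: str, remote: str = "origin") -> List[List[str]]:
    """Build the git commands for: stage everything, commit, push."""
    return [
        # Step 5, part one: stage every new or changed file.
        ["git", "add", "--all"],
        # Step 5, part two: record the staged files in the local repository.
        ["git", "commit", "-m", message],
        # Step 6: send the current branch to the remote; -u remembers
        # the remote branch so later pushes need no extra arguments.
        ["git", "push", "-u", remote, "HEAD"],
    ]


def run_in_repo(repo_dir: str, commands: List[List[str]]) -> None:
    """Run each command inside repo_dir, stopping at the first failure."""
    for command in commands:
        subprocess.run(command, cwd=repo_dir, check=True)


if __name__ == "__main__":
    commands = first_push_commands("Add empty Delphi application")
    for command in commands:
        print(" ".join(command))
    # To actually run them, point run_in_repo at your cloned folder, e.g.
    # run_in_repo(r"C:\Projects\MyFirstRepo", commands)  # hypothetical path
```

Nothing touches your repository unless you uncomment the last line. By default the script only prints the commands so you can read them.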

Step 7. Go to a different computer (if you can) or a different folder on the same computer (if you only have one) and clone the repo to that location. Observe for yourself that Bitbucket is like a Transporter for Code. The code on my computer is now code on another person's computer. Yet another use for Git. Code transporter. Team coding enabler. Backup system. Historical log of what your code was over time. And a thousand other things. Once you have internalized and understood the value of even 5% of what a good version control tool can do for you, you will never work without one.

Step 8. Make the application do something. Fibonacci sequences. Sorting interview question. Anything. Once it works, Commit the working code, and always explain the code you wrote in your commit messages. Get into good habits from the start so you don't have to learn the hard way. Now mess the code up on purpose. Then, using your Git client (SourceTree or another), find the change and DO NOT COMMIT it. Revert it instead. You have now observed another use for Git. The Time Machine. Any time you change your code in a way that makes it worse, you can just revert the change before you commit it.

Step 9. Make a bad change and commit it even though you know you just broke your app. Now find the way to revert a commit (get your code back to the way it was before the bad commit). I'm not going to tell you what the command is called, because I want you to figure it out for yourself. So Git is not only a time machine BEFORE BAD COMMITS, it is also a time machine that gets your code back AFTER bad commits.

Step 10. Now learn about .gitignore. Do this before you import any real projects into Git. Find out what .gitignore is and how to write patterns into it like *.dcu and *.local. Do not commit binaries (exes, dcus, dcps, bpls) into Git unless you really, really have to. In general, you should NEVER EVER commit a DCU for a .PAS file that is in the repo, as you should be BUILDING that .DCU file from source. If your projects are badly configured (the DCU Output folder is NOT set), get used to setting the DCU Output folder option in Delphi to a sensible default for all your projects. Mine is .\dcu\$(Platform)\$(Config) so that I can build for win32, win64, and even non-Windows platforms without my dcus getting mixed up. .gitignore is the place to list these dcu and exe output folders so that when you add your source code to a Git repo you do not commit the exes. If you really, really want to store your SSL DLLs in Git, nobody is going to come and arrest you, but please, for the love of the flying spaghetti monster, learn what .gitignore is and use it. Don't commit DCUs. Sermon endeth.
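To make those patterns concrete, here is a small Python sketch holding a starter .gitignore for a Delphi project, plus a deliberately simplified checker so you can see which paths it would catch. The file contents are my suggestion, assuming your DCU output folder is named `dcu` as in my setting above. The checker only understands two pattern shapes (file-name wildcards and `folder/`), so treat it as an approximation and not as Git's real matching rules, which also cover negation, anchoring and more.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath
from typing import List

# A suggested starter .gitignore for a Delphi project (adjust to taste).
GITIGNORE_TEXT = """\
# Compiled units and packages
*.dcu
*.dcp
*.bpl
# Executables
*.exe
# Per-user IDE settings
*.local
# DCU output folder
dcu/
"""


def load_patterns(text: str) -> List[str]:
    """Return the non-empty, non-comment lines of a .gitignore file."""
    patterns = []
    for line in text.splitlines():
        line = line.strip()
        if line and not line.startswith("#"):
            patterns.append(line)
    return patterns


def is_ignored(path: str, patterns: List[str]) -> bool:
    """Simplified check: would this forward-slash path be ignored?"""
    parts = PurePosixPath(path).parts
    for pattern in patterns:
        if pattern.endswith("/"):
            # Folder pattern: ignore anything beneath a folder of that name.
            if pattern.rstrip("/") in parts[:-1]:
                return True
        elif fnmatchcase(parts[-1], pattern):
            # File pattern: compare against the file name only.
            return True
    return False


if __name__ == "__main__":
    patterns = load_patterns(GITIGNORE_TEXT)
    for path in ["src/Unit1.pas", "dcu/Win32/Debug/Unit1.dcu", "Project1.exe"]:
        print(path, "ignored" if is_ignored(path, patterns) else "tracked")
```

For real projects, put the text of `GITIGNORE_TEXT` into a file named .gitignore at the top of your repo and let Git do the matching.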

Step 11. After you understand .gitignore, take your source code folder and do a git init there, from SourceTree. Add things in small chunks. Not 10K files in one commit. Add one top-level folder at a time, containing no more than roughly 50-80 megabytes of content, if you can. Trust me, you'll be glad you did this. If you have a 1 gigabyte project, please do not add 1 gigabyte of files in a single commit. After each commit, add more files until your repo is built. If you were previously using Mercurial or Subversion, there are tools that keep your history and convert it over to Git. I'm not going to cover them, but I know they exist and that they work, as I've used a variety of them. I generally don't bother. I just start over with new history in Git, and I go back to the old version control system when I need to see old versions.
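If you'd like to plan those chunks before you start clicking, here is a minimal Python sketch that groups top-level folders into commits under a size cap. The function name `plan_commits`, the 80 megabyte default, the example folder names and the choice to pack several small folders into one commit are all my assumptions. A folder that is bigger than the cap on its own simply gets a commit to itself.

```python
from typing import Dict, List

MEGABYTE = 1024 * 1024


def plan_commits(
    folder_sizes: Dict[str, int], limit_bytes: int = 80 * MEGABYTE
) -> List[List[str]]:
    """Group top-level folders into commits that stay under limit_bytes."""
    batches: List[List[str]] = []
    current: List[str] = []
    current_size = 0
    # Walk the folders in alphabetical order so the plan is predictable.
    for name in sorted(folder_sizes):
        size = folder_sizes[name]
        # Start a new commit when adding this folder would exceed the cap.
        if current and current_size + size > limit_bytes:
            batches.append(current)
            current, current_size = [], 0
        current.append(name)
        current_size += size
    if current:
        batches.append(current)
    return batches


if __name__ == "__main__":
    # Made-up folder sizes for illustration.
    sizes = {
        "components": 60 * MEGABYTE,
        "docs": 10 * MEGABYTE,
        "src": 30 * MEGABYTE,
        "thirdparty": 120 * MEGABYTE,
    }
    for number, batch in enumerate(plan_commits(sizes), start=1):
        print(f"commit {number}: {', '.join(batch)}")
```

The sketch only produces a plan. You still add and commit each group yourself, which is the habit this step is trying to build.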

Step 12. Get used to committing all your code after you write it and writing GOOD COMMIT MESSAGES. A good commit message explains the purpose of your changes. If you are lazy here, your future self will hate you. You don't need that kind of rejection in your life. WRITE GOOD COMMIT MESSAGES NOW.

Step 13. Push after every commit until you have made it a habit.

Step 14. Delay learning about and using submodules, branching, tagging, and other Git topics until everything I have said above is well ingrained and part of your habits. Learn to use Diff tools and learn to merge before you learn to branch. Imagine learning to drive a car by concentrating on driving fast before you're any good at steering and braking. Steering and braking are the ways you stay out of trouble. Merging and diffing are ways to get out of trouble. Submodules and branching are ways to get into it. Until you have the tools to sort yourself out, just don't make the crazy advanced messes. Don't try to deploy and manage a GitLab instance at cluster scale when you don't even know how to Diff two files and solve merge conflicts.

I believe the above will be helpful as I've written it, but I reserve the right to amend the advice above if anyone can point out ways in which the above might lead a new Git user astray. The happy path, of a contented Git user, is a gradual growth in competence with a powerful tool.

Can git be frustrating? Yes. Is it complicated? Yes. Is it worth it to learn it? Also Yes.

Are most of us going to do things wrong, and learn everything the hard way? Yes, because we are human. It's okay.